Figure: Home office with height-adjustable table

I want to optimize how I work when traveling, as I am lucky enough to do that occasionally. Call me a hobbyist digital nomad. This is a short guide to how I set up a to-go workstation.

I hate working only from my laptop. My posture is shit, the screen feels claustrophobic, and the keyboard (especially on a Mac) feels terrible. So I need something portable that can compete with my nice monitor-arm setup at home. The idea is to get a portable monitor and a tripod.

Figure: Setup and productive. Ready to dive into the code. My work laptop is a Mac though.

Figure: Setup is packed and ready to travel, for the next work-friendly adventure

Here’s an interesting hack: I use the standard VESA mount on the back of my monitor to attach it to a tripod. After researching on Reddit, I found that the NEBULA tripod (typically used for holding projectors) provides excellent stability and sturdiness for this setup. To make the connection, I use a camera/tripod cheese plate with a 75x75 mm VESA hole pattern and M4 screws to mount the monitor onto the tripod. The M4 screws were not a perfect fit on their own, but adding a washer made everything tight and stable. Credit for the trick goes to Reddit user arbitraryusername10 (see the references at the end).

Figure: Travel monitor attached to a cheese plate, making it tripod friendly

Mouse and keyboard

I am a creature of habit, and I prefer to use the same keyboard and mouse when working from home, in the office, and on the go. I also use them for gaming, which is why they are a bit overkill. I use a Logitech G915 TKL keyboard and a Logitech Pro Wireless mouse.

USB Hub

I use a UNI USB-C hub that accepts a USB-C charger, so it works as a hub and charges my laptop at the same time.

Webcam, microphone and sound

I don’t need a webcam; my laptop has one. For microphone and sound, I use Bose QuietComfort Earbuds, which have good noise cancellation.

Monitor

There are many options in varying price ranges. Here are some things to look out for.

- Choose a screen size that is comfortable for you when traveling. I suggest the 16-18” range.

- Get anything with a 1920x1080 (1080p) resolution. Most portable monitors have this resolution, no matter the screen’s physical size.

- It needs a VESA mount, preferably one that takes M4 screws.

- You can use a VESA mount with two or four screws. In my experience, two are enough when the screws are thick enough.

- Pay attention to the length of the screws, as it needs to be precise. Use washers where required.

⚠️ WARNING: Do not buy a monitor whose VESA mount takes M3 screws. The screws are too small and will not hold anything. I tried it, and with any weight on it, the mount fell apart.

Note: ASUS makes the ZenScreen MB16QHG portable monitor, which looks interesting because it has a built-in tripod mount. However, it is a bit expensive.

My personal shopping list

Disclaimer: These are Amazon affiliate links. This is my first time using them, and I want to see if it makes a difference.

- Portable Monitor 18.5 Inch https://amzn.to/4b9Nkjj

- CAMVATE Cheese Plate (two-screw VESA mount) https://amzn.to/3XCxs3t or CAMVATE Cheese Plate with 75x75mm (four-screw VESA mount) https://amzn.to/4kpPO1k

- M4 Screw pack https://amzn.to/41sayxK

- NEBULA Capsule Tripod https://amzn.to/41qPaJn

- VVGAOGES Aluminium Laptop Stand https://amzn.to/41tNLlp

- UNI USB-C Hub (can’t remember where I bought it)

Total price for my setup: ~250 EUR, excluding work laptop.

References:

- Inspiration source: HT arbitraryusername10 https://www.reddit.com/r/digitalnomad/comments/1fv8fwx/lightweight_travel_setup_for_a_secondary_monitor/

- https://ternsetups.com/ Looks good, but I wanted a bigger screen and the ability to swap it out.

- https://www.asus.com/ch-en/displays-desktops/monitors/zenscreen/asus-zenscreen-mb16qhg/ Interesting monitor from ASUS. Expensive.

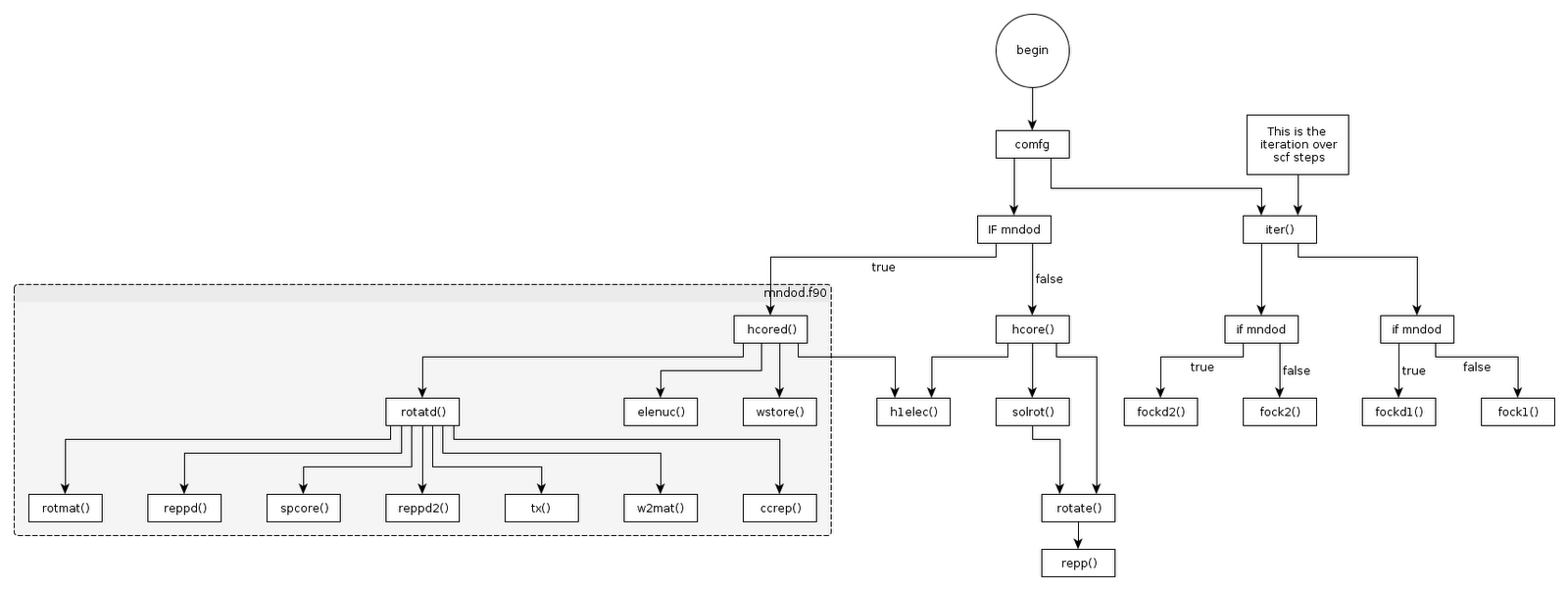

Figure 1: Subroutine map of MNDO in MOPAC

Figure 1: Subroutine map of MNDO in MOPAC Figure 2: Subroutine map of MNDO in MOPAC

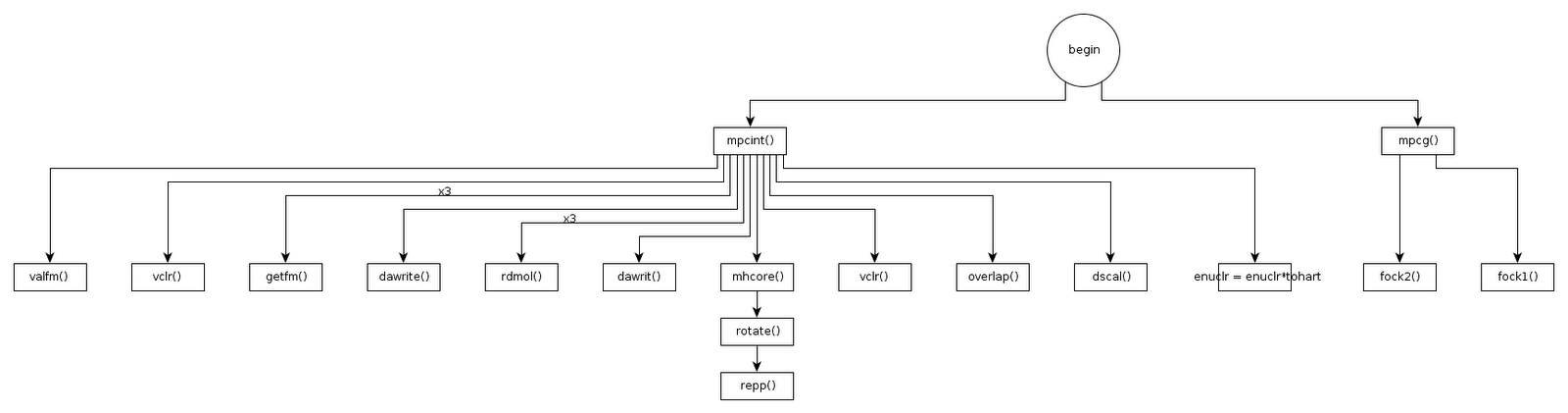

Figure 2: Subroutine map of MNDO in MOPAC Figure 3: Subroutine map of MNDO in MOPAC

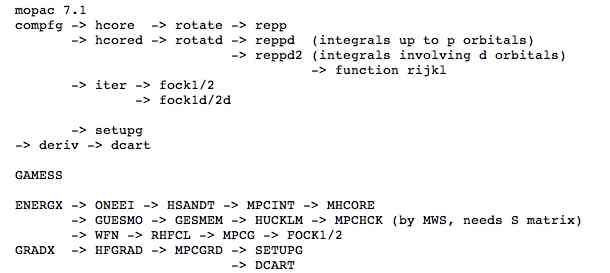

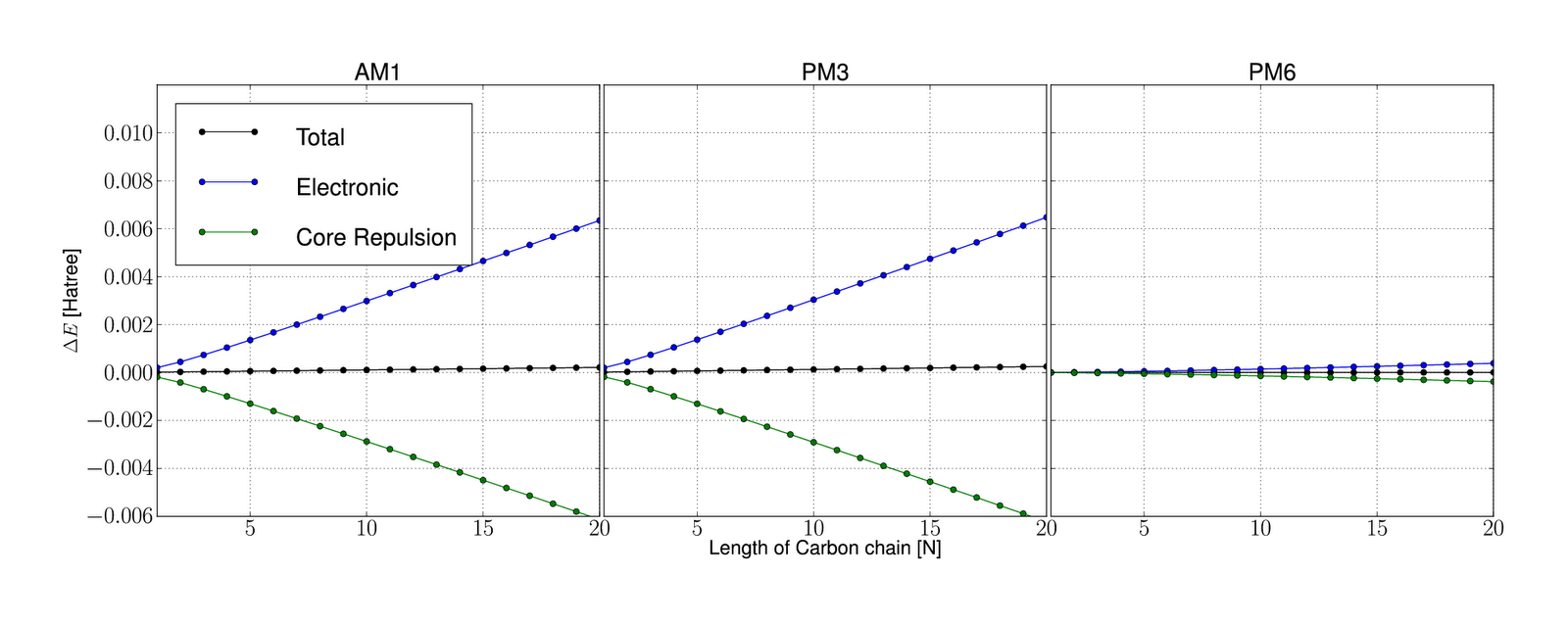

Figure 3: Subroutine map of MNDO in MOPAC Figure 1: Errors are looking bad

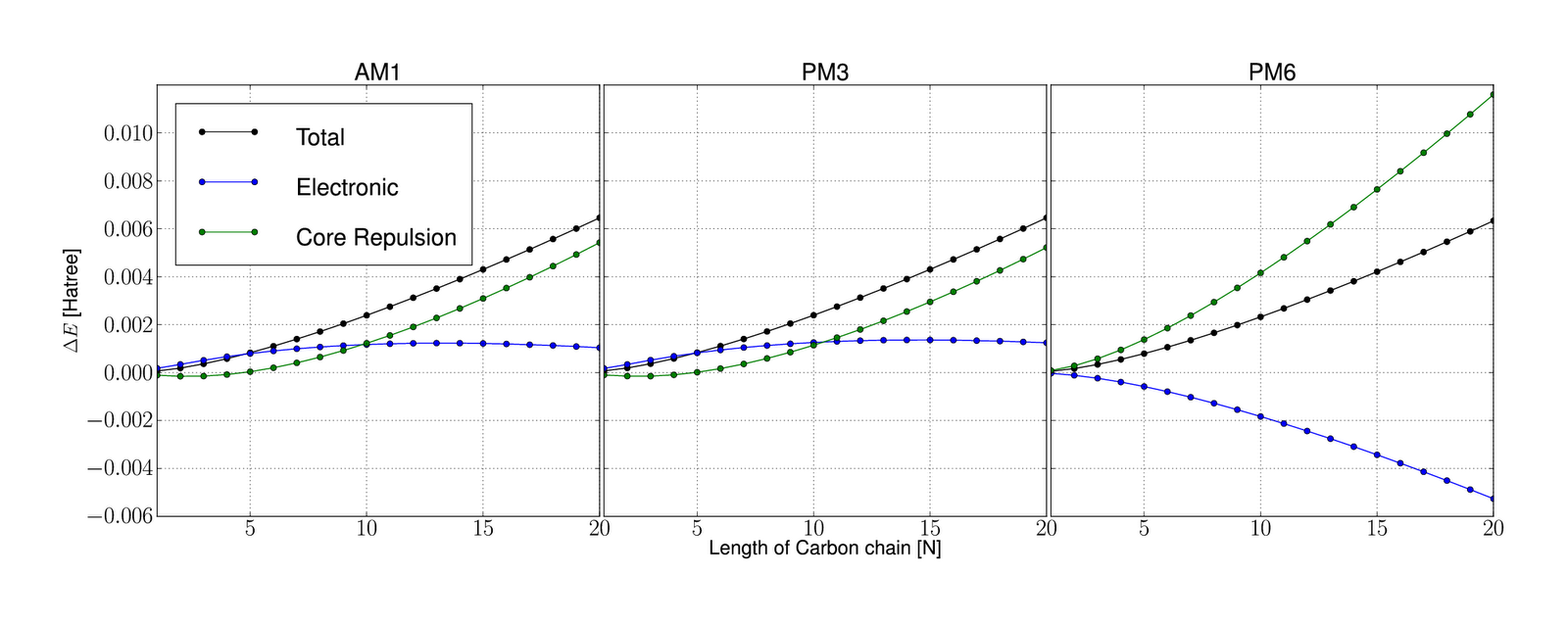

Figure 1: Errors are looking bad Figure 2: Errors are looking .. better

Figure 2: Errors are looking .. better